Microprocessor Report on Autonomous Vehicles: Mobileye Increases Car EyeQ

Nov 29, 2016

I am often asked by engineers who are not experts on autonomous vehicles exactly how the semiconductor systems in a car work as the brains of an autonomous system. A follow-up question is usually about vendors’ current products and market trends.

I often start off by describing the current state of the art in advanced driver assistance systems (ADAS) and the importance of functional safety standards for these systems, and then talk about the technology gap we need to cross to create fully autonomous systems. I invariably end up babbling incoherently in a flurry of abbreviations and acronyms: NHTSA, ISO 26262, SEooC, V2I, CNN, etc.

No more! I found a great article by Microprocessor Report’s Mike Demler, the best introduction I’ve seen to the role and functions of semiconductors in driverless cars. It also describes how current ADAS systems differ and what the market trends are. The article is titled “Mobileye Increases Car EyeQ: Computer-Vision Processors Will Enable Autonomous Vehicles” and is available for free at http://www.linleygroup.com/mpr/article.php?id=11437.

I am writing about it here because it is rare to find this kind of detailed but succinct analysis for free online. Information like this is usually available only behind a paywall or in an expensive market research report.

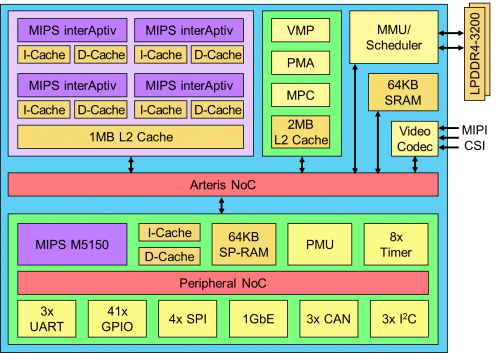

In addition to describing Mobileye’s EyeQ4, Mike also writes about ADAS technologies from Nvidia, NXP (formerly Freescale), and Synopsys.

Although this article is over a year old, it should be the first thing you read as part of your self-education in how autonomous vehicles work!
